Every ambitious analytics initiative – whether it’s demand forecasting, inventory optimization, supply chain risk prediction, or even next-best customer recommendations – should begin with the end in mind. In practice, this means starting with the AI-driven outcome your business wants (forecast accuracy, reduced stockouts, optimized supply planning, etc.) and working backward to ensure the data needed for that outcome is ready, accessible, and trustworthy. Achieving this often requires reimagining the data foundation as a set of “Data Products” supported by modern architecture principles (like data mesh) and platforms (like Microsoft Fabric). This strategic approach ensures that your data estate – the complete infrastructure and data assets of your organization – is geared toward delivering measurable business value from AI and analytics.

Why “data products”? Traditional data architectures have struggled to keep up with today’s demands. Many enterprises have centralized data lakes or warehouses that burst at the seams as more sources and use cases pile on, creating bottlenecks and shadow systems when teams can’t get the data they need quickly 1. A high-performing data organization, by contrast, treats data as a first-class product: clean, organized, and readily available for consumption. This shift isn’t just technical – it’s a cultural and organizational change. By reframing data assets as products designed to serve specific business needs, companies foster greater ownership, quality, and reuse of data across the enterprise 2. In fact, research shows that next-generation data strategies have tangible payoffs: top “data product” adopters are 3× more likely to generate significant financial impact (20%+ EBIT contributions) from their data initiatives 3.

Below we delve into how an “AI-end-in-mind” approach – underpinned by data products, data mesh principles, and Microsoft Fabric – helps organizations become more agile, scalable, and AI-ready. We’ll look at supply chain forecasting as a key use case (along with other industry examples) and break down how value-focused data products turn raw data into strategic assets for AI-driven decision-making.

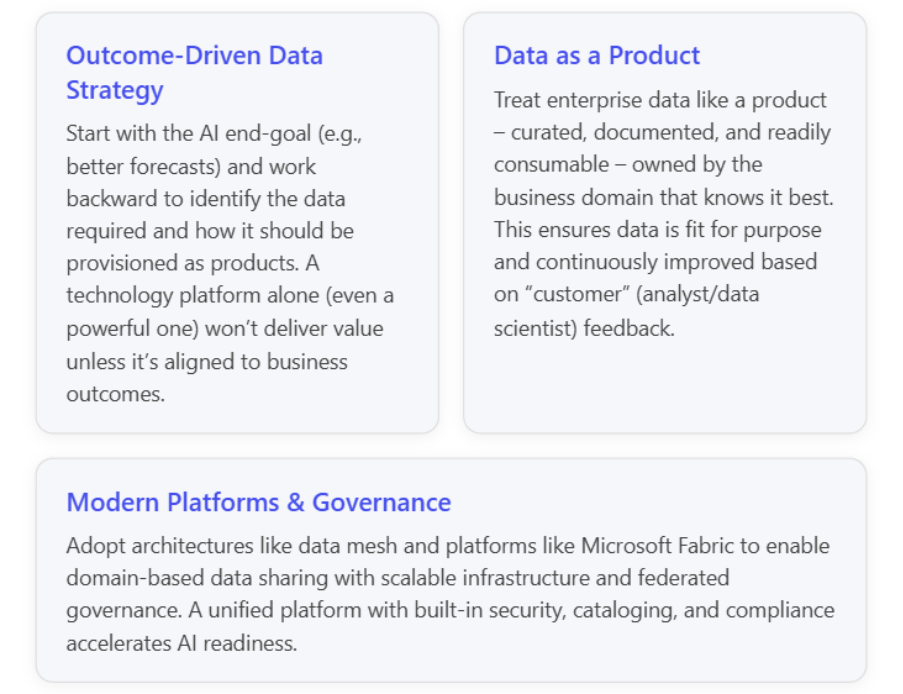

Starting with the End in Mind: AI Outcomes as the North Star

Too often, data teams collect and store data first and only later figure out how to derive value from it. The result can be massive data lakes with unclear purpose, or AI projects that stall due to “data not ready” issues. An AI-end-in-mind strategy flips that script: one begins by clearly defining the business question or AI outcome and then determines what data (and in what form) is needed to answer that question.

Consider a supply chain forecasting scenario. The goal might be to predict demand and inventory levels across a global supply network with high accuracy, so the business can reduce excess stock while avoiding stockouts. Achieving this predictive (and eventually prescriptive) capability requires specific data inputs: historical sales data, current inventory positions, supplier lead times, logistics data, market trends, maybe even weather or social sentiment (for demand spikes). If these data are scattered across siloed systems or not in a usable state, any AI model will struggle. A data scientist might spend 80% of the time wrangling and cleaning data – and still end up with an underperforming model if key inputs are missing or low-quality.

Leading organizations avoid this pitfall by mapping their data needs to the desired outcome upfront. For example, if the outcome is accurate demand forecasting, they identify the critical datasets (point-of-sale transaction history, promotions, socioeconomic indicators, etc.) and ensure those are available as integrated, analysis-ready data products before building complex ML models. This approach was succinctly captured in an internal strategy note: “Clarify the road map to be business-led and data-product-backed… Name the data products per use case, define success with KPIs, and sequence milestones so that project teams and the business get usable outputs early.” 4. In other words, every AI use case (like reducing supply chain disruptions or improving forecast accuracy) should be linked to one or more data products that deliver the necessary data, with clear metrics to track success.

Crucially, starting with business outcomes helps prioritize data investments by value. Instead of boiling the ocean, you might focus on a subset of data that moves the needle for a specific pain point (e.g., out-of-stock rate or forecast error in our supply chain case). By delivering a quick win – say, a pilot that uses cleaned sales and inventory data to improve forecast accuracy in one region – you not only prove the ROI of data work but also create momentum for broader data platform development. This iterative, value-focused ethos ensures that adopting new technology (like Microsoft Fabric) isn’t just “tech for tech’s sake.” It directly supports business KPIs – whether that’s minimizing revenue erosion, improving profitability, or spotting risks earlier in operations 5.

In summary, think backwards from the AI/analytics goal. Identify what data you need, who owns that data, and how it must be transformed or combined. This naturally leads to the concept of organizing data as products that can be built, improved, and delivered to meet those goals. In the next section, we’ll explore what it means to treat data as a product and why it’s a cornerstone of a modern, AI-ready data estate.

Data Products: The Building Blocks of a Modern Data Estate

“Data Product” is more than a buzzword – it’s a paradigm shift in how we manage and deliver data. When we say data is a product, we imply that data should be packaged for consumption, with a clear purpose, high quality, and continuous lifecycle management, just like a software product or a physical good. This idea is central to the data mesh architecture, which champions a domain-driven, decentralized approach to data management. Let’s break down the key traits of data products and why they matter:

- Owned by Domain Teams: Each data product is owned by the team or business domain that knows the data best (e.g. Finance owns the “Financial Ledger” data product, Supply Chain owns the “Inventory Status” data product). This decentralization assigns accountability and leverages local expertise, fixing the problem of a distant central team being unaware of data nuances 6 7. Domain ownership also means data producers are responsible for quality and documentation, not just dumping data over the wall.

- Data as a Product (Not a Byproduct): In the past, data was often an exhaust of business operations – captured as logs or records, then later ETL’d into a warehouse. In a data product mindset, we design data intentionally for reuse and analytics. A data product comes with context (metadata), is discoverable via a catalog, trustworthy (testing/validation in place), and is served in a way that’s easy to consume (e.g. as an API, a cleaned table, or a dashboard) 8. It’s the difference between a raw ingredient and a ready-to-eat meal.

- Interoperable & Composable: Data products are not isolated silos; they are designed to connect and integrate. Because they follow common standards (schemas, governance rules), you can compose higher-order analytics from multiple data products. For instance, a “Demand Forecast” data product might use inputs from a “Sales History” product and a “Marketing Spend” product. The data mesh approach facilitates this by enabling cross-domain data sharing without massive centralized pipelines 9 10. In one case, a company implemented a mesh where data from 30+ source systems (SAP, IoT sensors, supply chain apps, etc.) flowed through cleansing and aggregation stages, and was then exposed via Snowflake as various domain data products for cross-domain analytics 11 12.

- Self-Service Infrastructure: Treating data as a product goes hand-in-hand with providing the platform and tools so that domain teams can publish and manage their own data products. This is the self-serve platform principle: centrally, you provide common infrastructure (data lake, pipelines, catalog, governance guardrails), so the domain experts can focus on the content of their data product. They shouldn’t have to reinvent tooling for every dataset. Modern cloud platforms, as we’ll discuss, are making this far easier with pre-built services for storage, ETL, quality, and even AI model integration.

So why go through the effort to create data products? Because it directly addresses the failures of traditional approaches. In a classic centralized data warehouse scenario, the bottleneck is usually the central IT data team. As demand for new analytics grows, that team becomes overextended – leading to slow turnaround, stale data, and frustrated business units. People resort to rogue Excel sheets and shadow databases, undermining the single source of truth 13. Quality suffers because the further data travels from its source domain, the less context and accountability remain. By contrast, a data product approach keeps data closer to its origin (domain teams maintain it) while making it more accessible enterprise-wide through standard interfaces and governance. It essentially federates the responsibility: many teams each handle a slice of the data (their product), under an aligned set of standards, rather than one team trying to do it all.

✨ Real-World Example: Supply Chain Data Products Pay Off

To illustrate the impact, let’s revisit supply chain forecasting – but this time through the lens of a company that implemented data products. An American consumer packaged goods (CPG) manufacturer (65+ brands) faced a classic challenge: gaps in their distribution data were causing stockouts and lost sales. Data was siloed in many systems, preventing a consolidated view of inventory and hindering real-time analysis 14. Their solution was to modernize the data architecture with a data mesh, effectively creating data products for each key domain of the supply chain (planning, procurement, manufacturing, delivery, sales) 15.

Sigmoid (a data consultancy) helped this CPG firm implement domain-specific pipelines and treat “data-as-a-product” across these areas. Instead of a single monolithic warehouse, they built a decentralized but connected system: data from ~30 sources (SAP ERP, Blue Yonder, Oracle transport management, IoT sensor feeds, etc.) was ingested and processed through Bronze–Silver–Gold layers (raw to curated) on a cloud lake (ADLS) and Snowflake platform 16 17. Each domain (plan, procure, make, deliver, sell) had its own data hub but with interoperability for a 360° view 18. Crucially, all the refined data was cataloged with business metadata for discovery, and a “data mesh layer” on Snowflake allowed analysts to search and retrieve data products (much like shopping for products online) for various use cases 19 20. On top of this, they built custom dashboards and even embedded ML models for prescriptive insights (e.g. optimizing supply chain scenarios).

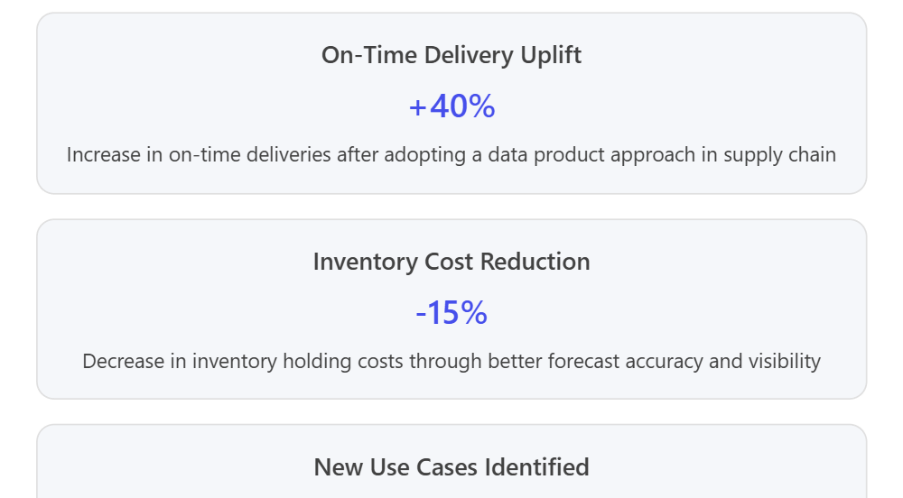

The results were impressive: Inventory costs dropped ~15% thanks to better alignment of stock levels with demand 21. They saw a 40% increase in on-time product deliveries because insights into supply disruptions and delays were surfaced faster 22. The data mesh approach also led to 60% higher data usability across domains (people could find and use data beyond their silo) and uncovered 3× more new analytics use-cases that had previously been obscured or impossible with siloed data 23. In short, treating supply chain data as a set of products – and building a modern data estate to support that – directly translated into business outcomes: fewer stockouts, lower carrying costs, and a more proactive supply chain. The business team could “identify disruptions which might impact operations” much more quickly and adjust plans accordingly 24, and they significantly reduced time-to-market for new analytical insights as well 25.

Such success stories aren’t limited to supply chain or manufacturing. Financial services firms are also embracing data products to become more data-driven. For example, Saxo Bank (a European online investment bank) partnered with ThoughtWorks to implement a data mesh – they built a “data workbench” where internal data assets are searchable and have product-like descriptions (with usage stats and user feedback) 26. This made data transparent, trustworthy, and easily shareable across the bank. The outcome? Saxo saw a reduced cost of customer acquisition, more efficient operations, and stronger compliance (fewer regulatory issues) after deploying their data product catalog, since every team could find the data they need and trust its lineage 27. In pharmaceuticals, an organization like Gilead Sciences has taken a similar route — focusing on “managing their data as a product” and adopting cloud-first, domain-oriented architecture to accelerate R&D insights 28. And in manufacturing, companies are productizing IoT sensor and maintenance data to feed predictive maintenance AI models, leading to less downtime and more optimal asset utilization 29. Across these diverse industries (CPG, banking, pharma, industrial), a common pattern emerges: data products create a flexible, scalable data foundation that directly supports AI and analytics goals.

Traditional vs. Data Product-Oriented Architecture

It’s helpful to contrast the legacy approach to enterprise data architecture with the new “data product” approach (often enabled by data mesh principles). Below is a comparison across a few key dimensions that matter to technology leaders: agility, scalability, and AI-readiness of the data environment.

| Aspect | Traditional Data Architecture (Centralized) | Data Product-Oriented Architecture (Domain/Mesh) |

| Agility | Slow and IT-driven. A centralized team must gather requirements, model data, and build pipelines for every new request. This often leads to long lead times for analytics, and business users may face data latency\6. Changes are risky and slow in a monolithic warehouse setup4. | Fast and business-driven. Multiple domain-aligned teams can develop and update their own data products in parallel, without bottlenecking on a central IT queue44. Agile methods (small increments) are easier when each domain handles its slice, resulting in quicker delivery of insights (faster time-to-value). |

| Scalability | Scale limitations emerge as data grows. A monolithic architecture can struggle with diverse data types and huge volumes. Adding new sources stresses centralized ETL pipelines (cost and performance issues)44. Scaling up often means expensive appliances or complex sharding, and any issue can impact the whole system. | Scales out by design. Each data product can leverage cloud-native platforms to scale storage and compute independently (e.g. one domain’s data can be on a lakehouse, another’s in a streaming system, but all accessible)55. Because integration is federated, adding a new domain or dataset doesn’t overwhelm a single pipeline. The architecture can gracefully expand to new use cases, and domain teams can choose the best tools for their needs (within guidelines), all while adhering to common standards for interoperability. |

| AI-Readiness | Delayed and siloed. Data for AI/ML is often not in a usable state – significant wrangling is needed to combine siloed datasets\6. Inconsistent definitions across the enterprise make feature engineering difficult. The central data warehouse might not capture granular or unstructured data needed for advanced AI. Overall, the data isn’t treated with an AI consumer in mind, which slows model development. | Built-in AI enablement. “Data as a product” means data is already curated and documented – a boon for data scientists who can discover and trust features more easily44. Many data product platforms integrate directly with ML tools (e.g., providing ready-made feature sets or streaming data for real-time AI). Because data products cover both structured and unstructured data domains, they provide a richer substrate for AI. Teams can quickly plug data products into ML pipelines, accelerating experimentation. In essence, the organization’s data is prepped to be consumable by AI/analytics with minimal friction. |

Table: Traditional vs. data product–oriented data architectures across key dimensions. The latter fosters greater agility (through decentralization), scalability (through modularity and cloud leverage), and AI readiness (through treating data as an asset to be consumed by ML/AI with minimal prep).

It’s worth noting that moving to a data product architecture doesn’t mean chaos or anarchy in data management. On the contrary, it requires strong overarching governance and standards – but implemented in a federated way. Instead of one central body approving every data change, the governance model shifts to one of guardrails and automated policies that each domain team abides by. This is sometimes called federated computational governance, one of the core principles of data mesh 30. It ensures that while domains work autonomously, they do so in a way that’s compliant with global standards (security, privacy, data quality, interoperability). We’ll discuss next how modern platforms make this federated governance feasible out-of-the-box, whereas it would have been very difficult to enforce consistently in years past.

Data Mesh Architecture & Microsoft Fabric: Enabling Scalable, Governed Data Products

Adopting a data product approach is as much an organizational journey as a technical one. Data mesh provides the organizing principles (decentralize & domain-own your data, treat it as a product, provide self-serve platform, govern federatively) 31, while modern data platforms provide the technical foundation to implement those principles at scale. One of the emerging platforms in this space is Microsoft Fabric, which is particularly interesting to organizations already invested in the Microsoft Azure analytics stack. Let’s explore how Fabric and similar modern architectures support the data product paradigm:

The Data Mesh Principles in Practice

- Domain-Oriented Ownership – How to implement? Start by aligning your data architecture with business domains. This might mean creating separate data pipelines, repositories, or lakehouse tables for each domain area (e.g., Marketing, SupplyChain, Finance), rather than one giant warehouse for all data. Many companies create a “domain data model” or domain data marts. Tools and cloud resources can be segmented per domain (using resource groups, projects, or workspaces aligned to domains). Fabric example: Fabric allows multiple workspaces and Lakehouses; each business domain could get its own workspace to curate its data, while still connecting to the shared Fabric environment. This maps well to domain-specific lakehouses on a unified foundation 32.

- Data as a Product – Ensure each domain team not only ingests raw data but also refines it into polished datasets ready for others. This often involves a medallion architecture (Bronze, Silver, Gold layers) or similar multi-layer processing within each domain to progressively improve data quality 33 34. The Gold layer in particular is where data should be in business-friendly form, validated and documented – essentially the product you publish. Fabric example: Fabric’s Lakehouse and Warehouse capabilities let teams build these layers using familiar SQL or Spark, then share the clean Gold tables across the organization via the OneLake. Additionally, Fabric’s integration with Power BI and upcoming Copilot AI means those data products can be easily turned into reports or even conversational data apps for end-users 35 36.

- Self-Serve Data Platform – This principle is about providing the tooling (pipelines, streams, catalogs, compute) such that domain teams can do data work themselves without waiting on a central team for infrastructure. In the cloud era, this is much easier: you can use managed services for storage, ELT, and analytics that each domain team can operate with minimal DevOps burden. Fabric example: Microsoft Fabric is essentially an all-in-one analytics SaaS: it bundles data integration (Azure Data Factory in new form), a Spark engine, a SQL warehouse, real-time analytics, data science (Python/R) notebooks, and BI, all in a unified experience. A central IT group might set up Fabric and handle initial tenant settings, but then hand over workspace controls to domain teams. Each team can create their pipelines (in Data Factory), their lakehouse tables, do ML experiments, all within a browser-based unified environment. This drastically lowers the friction to implement data products – you don’t need to stitch together half a dozen disparate tools and risk integration issues. (In traditional setups, one had separate ETL software, a data lake, a warehouse, a BI tool – making it complex to manage end-to-end. Fabric integrates these, so hand-offs between raw data to cleaned data to BI dashboard are seamless.)

- Federated Governance – As domains operate more independently, governance must be baked into the platform to avoid chaos. Key needs are: central data cataloging, access control and security, compliance checks, and data quality monitoring that run across domains. Fabric example: Fabric leverages Microsoft Purview for governance, meaning as data products are created, their metadata (schemas, classifications, lineage) can be automatically registered and governed in a central catalog 37. Uniform security is enforced via Azure AD roles and sensitivity labels, so even though different teams publish data, they adhere to company-wide privacy rules. Also, Fabric’s OneLake (a single logical data lake underlying all Fabric workspaces) means there is essentially one source of truth storage with “shortcuts” to share data across domains without duplicating it 38 39. This “single lake” design helps maintain consistent governance – e.g., if the Finance domain shares a table with Sales via a OneLake shortcut, it’s still the same data file under the hood, and things like retention policies or PII masking apply universally.

In summary, a platform like Fabric addresses the hard engineering parts of a data mesh so you can focus on your actual data. It delivers the persistent lake and warehouse storage, the pipelines, and the governance tooling in one package. Another benefit is elastic scalability – since Fabric runs on Azure, you can scale up processing power per domain as needed, and scale it down when not in use, optimizing cost for performance. It’s a far cry from the old on-prem warehouse where you had to over-provision hardware for peak load.

Fabric in Action: Accelerating Data Product Delivery

To make this concrete, imagine our supply chain team from earlier using Microsoft Fabric. They could have a SupplyChain workspace in Fabric where they ingest raw feeds (Bronze) from ERP and sensors into their Lakehouse. Using Fabric notebooks or Spark, they refine it (Silver) by cleaning and joining with, say, master data. Then they create Gold tables for “Daily Inventory by DC” and “Open Orders”. Because Fabric’s OneLake is unified, the Finance workspace can create a shortcut to those Gold tables, combining them with, say, cost data to produce a “Working Capital” data product, and so on. All of these data products are centrally discoverable with Purview, and any authorized data scientist could query them directly using Fabric’s SQL endpoint or even a natural language query via Copilot. The speed at which new combinations can be assembled – without engineering a brand-new pipeline from scratch – is dramatically improved.

This isn’t just theoretical. Many organizations are already on this journey. Microsoft reports that 21,000+ customers started using Fabric within months of its release 40 41, indicating a strong interest in unified data product platforms. At Neudesic (an IBM Company and Microsoft partner), the team observed that simply “spinning up Fabric” isn’t enough – success comes when Fabric is used in service of a data-product strategy aligned to business outcomes 42. Their recommended roadmap includes naming the specific data products to build, aligning them to use cases, and establishing trust and governance from day one (so that the data products are reliable and secure) 43 44. When Fabric (or any modern platform) is deployed with that value-focused game plan, the benefits can be significant. Organizations have reported 10x improvements in certain analytics development times and far easier collaboration between data engineers and data consumers when working in a single Fabric environment, as opposed to siloed tools.

It’s also worth noting that AI itself is driving new requirements for data architecture. The rise of generative AI and LLMs means companies are now looking to integrate unstructured data and vector embeddings into their data estates 45 46. Here again, having a well-governed, productized data layer helps. You might add a vector database or incorporate embeddings as part of a data product (for example, a “Customer Support Corpus” data product with text data ready for an AI chatbot). Companies that adopted data mesh early find themselves in a good position to plug in such new components because they already have clarity on data ownership and quality. A recent McKinsey study noted that companies successfully leveraging gen AI have revised their data architecture and governance, implementing things like vector stores alongside robust data access controls 47 48. The takeaway: a modern data estate built on data product principles is not only good for classical analytics but is also a future-proof foundation for emerging AI technologies.

Driving Value: A Strategic, Visionary Data Estate for AI

Implementing “AI-end-in-mind with data products” is as much a leadership challenge as a technical one. It requires vision to break from the familiar centralized model, commitment to invest in new platforms and ways of working, and cross-functional collaboration between business and IT like never before. But the rewards are clear: more agility in decision-making, greater scalability as data grows, and an organization that is truly AI-ready by design.

To recap the strategic insights for technology leaders:

- Tie Data Initiatives to Business Outcomes: Ensure every data platform project starts by asking “What value will this deliver? How will we measure success?” Use the language of the business (revenue, cost, risk, customer experience) and then map it to data products and AI use cases. This keeps efforts focused and justified. A data estate built this way essentially becomes a portfolio of products, each with a purpose and ROI, rather than a cost center black box.

- Empower Domains, with Guardrails: Shift to a federated operating model. Upskill and equip domain teams to own their data pipelines and products. Simultaneously, establish a central Data Office that provides governance frameworks, enterprise data standards, and shared tools (like Fabric). Regularly convene a data product council or similar body to share best practices across domains and ensure interoperability. Culture-wise, encourage a product mindset – domain teams should treat data consumers as customers, seeking feedback and continuously improving data offerings 49.

- Leverage Next-Gen Platforms: Embrace cloud-native, unified platforms (such as Microsoft Fabric, Databricks Lakehouse, Snowflake with Data Cloud, etc.) that align with the data product philosophy. These platforms remove a lot of heavy lifting and integrate governance and AI capabilities. This allows your teams to focus on business logic and data quality rather than worrying about server specs or software patching. Importantly, take advantage of automation and DevOps for data (DataOps) features – e.g., use infrastructure-as-code to deploy data pipelines, automated testing for data quality, CI/CD for analytics models – to increase reliability and speed 50 51.

- Measure and Celebrate Value Delivery: Track improvements like those in the examples: forecast error reduction, inventory turns improvement, faster cycle times, new use cases delivered, etc. When you can demonstrate, say, a KPI shift (like 15% lower inventory carrying cost 52 thanks to your data initiative), broadcast that success. It builds momentum and buy-in. High-level metrics such as time-to-insight or percent of decisions based on data vs. gut can also show the organization’s transformation. Some companies even monetize their data products externally (selling data or insights to partners), turning the data function from a cost center into a revenue generator – the ultimate validation of the “data as product” concept.

In closing, solving for the AI endgame from the start means your data architecture is never an afterthought or a barrier to innovation. Instead, it becomes a springboard for AI – enabling everything from classic predictive analytics to cutting-edge generative AI applications. As data volumes, variety, and velocities continue to explode, the old centralized ways simply can’t keep up. Organizations that adopt a product-centric, domain-driven data strategy on modern, cloud-based platforms will be the ones to outpace their competitors, much like digital-native “frontier firms” do by building AI into their core processes.

By building a modern data estate with data products at its heart, you ensure that when your company asks complex questions – “What will customer demand look like next quarter?” “How can we optimize our supply chain?” “Which risks should we mitigate proactively?” – the answers are not only achievable with AI, but they are accurate, timely, and trusted. Your data stops being a bystander and becomes a true strategic asset, actively driving the business toward its goals. In a world where every company is striving to be AI-driven, those who start with the AI end in mind and empower it with the right data foundation will lead the way.

Nathan Lasnoski